The most critical security risk is prompt injection. Similar to SQL injection, it allows attackers to slip commands into what looks like normal input. The difference is that with LLMs, there is no standard way to isolate or escape input. Anything the model sees, including user input, search results, or retrieved documents, can override the system prompt or even trigger tool calls.

If you are building an agent, you must design for worst-case scenarios. The model will see everything an attacker can control, and it might do exactly what they want.
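
One way to design for that worst case is to treat every tool call the model proposes as untrusted and check it in ordinary code before it runs. The sketch below is illustrative only: `ToolCall`, `TOOL_POLICY`, `authorizeToolCall`, and the tool names `searchDocs` and `sendEmail` are hypothetical and not taken from any SDK. It assumes your application can tell whether attacker-controllable content (search results, retrieved documents, and so on) has entered the model's context.

```typescript
// Hypothetical shape of a tool call proposed by the model (not from any SDK).
type ToolCall = { name: string; args: Record<string, unknown> };

type Decision =
  | { allowed: true }
  | { allowed: false; reason: string };

// Allowlist of tools the agent may ever call, with a flag for side effects.
// Tool names here are placeholders for illustration.
const TOOL_POLICY = new Map<string, { sideEffects: boolean }>([
  ["searchDocs", { sideEffects: false }],
  ["sendEmail", { sideEffects: true }],
]);

// Decide in application code, outside the model, whether a call may run.
function authorizeToolCall(
  call: ToolCall,
  contextHasUntrustedContent: boolean,
): Decision {
  const policy = TOOL_POLICY.get(call.name);
  if (!policy) {
    // Anything not on the allowlist is rejected outright.
    return { allowed: false, reason: `unknown tool: ${call.name}` };
  }
  if (policy.sideEffects && contextHasUntrustedContent) {
    // Attacker-controlled text may have steered this call, so block it
    // (or route it to a human for approval) instead of executing it.
    return {
      allowed: false,
      reason: `${call.name} has side effects and the context contains untrusted content`,
    };
  }
  return { allowed: true };
}
```

The point of the design is that the check does not depend on the model behaving well: even if injected text convinces the model to request `sendEmail`, the request is refused by code the attacker cannot influence.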

Subscribe to Vercel Daily for unlimited access to all articles and exclusive content.

Fluid compute is Vercel’s next-generation compute model designed to handle modern workloads with real-time scaling, cost efficiency, and minimal overhead.

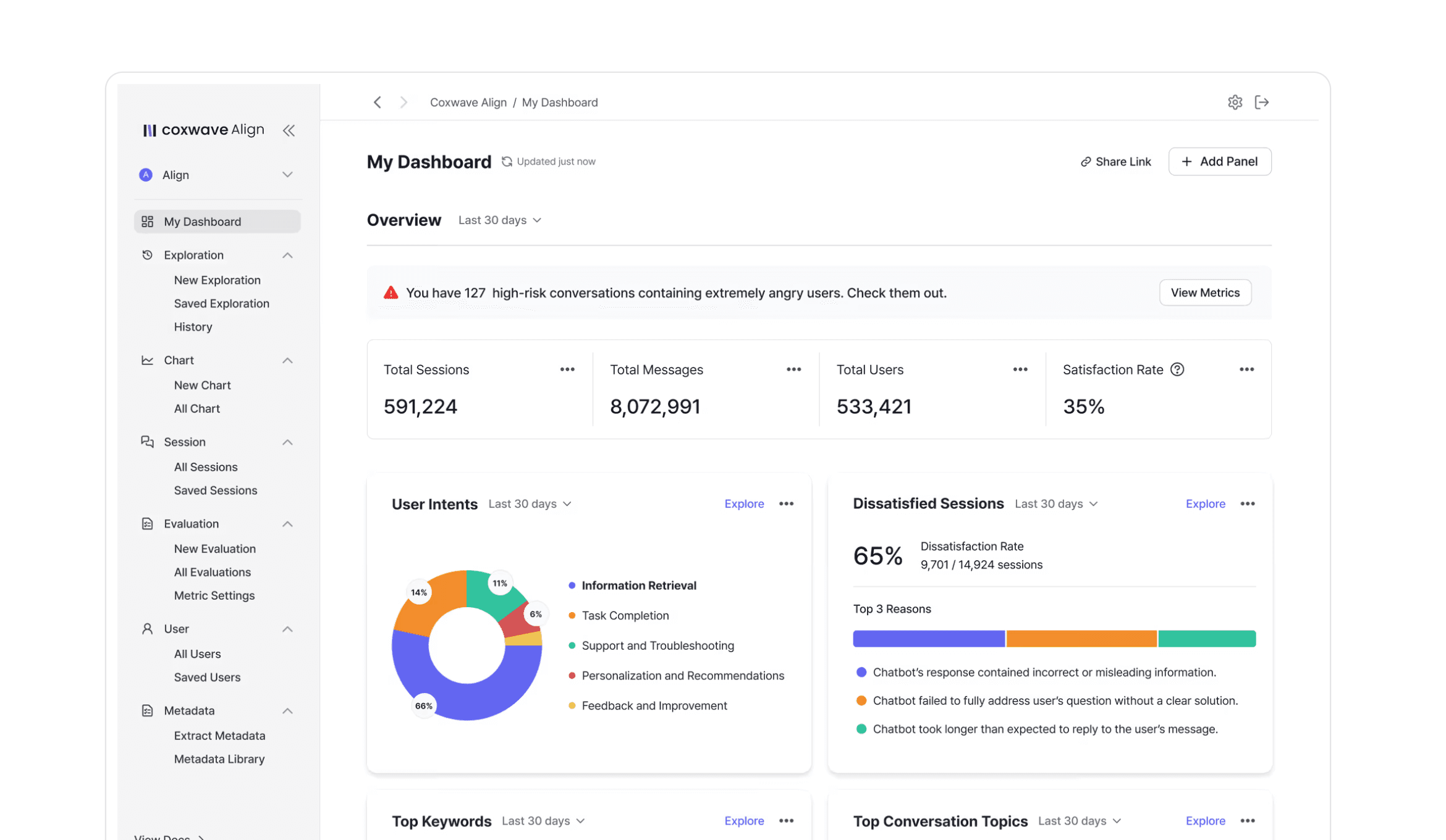

Read more about Coxwave's journey to cutting deployment times by 85% and building AI-native products faster with Vercel

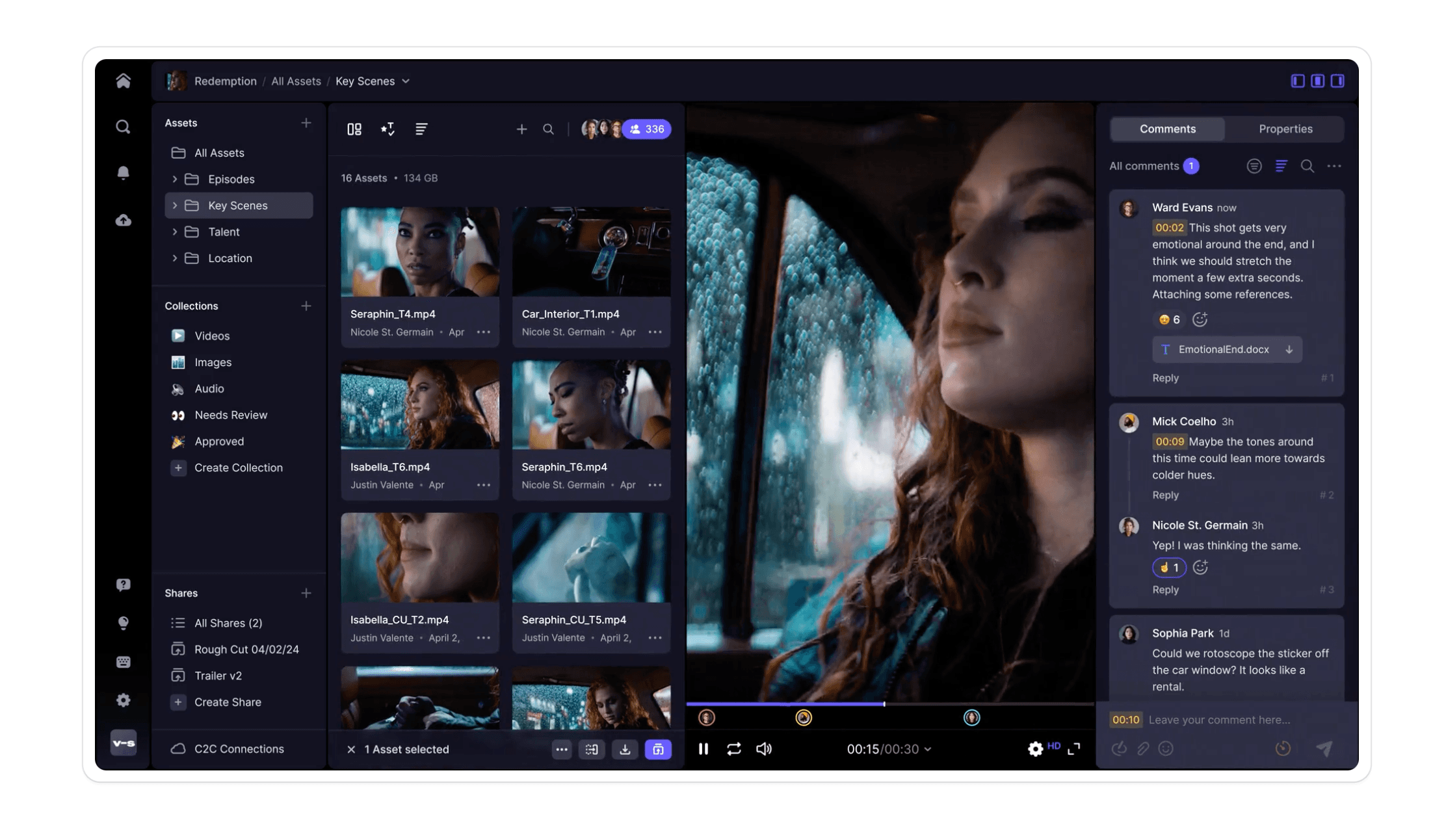

Frame.io's users "see in milliseconds," so every interaction, animation, and frame within a user's web experience matters.

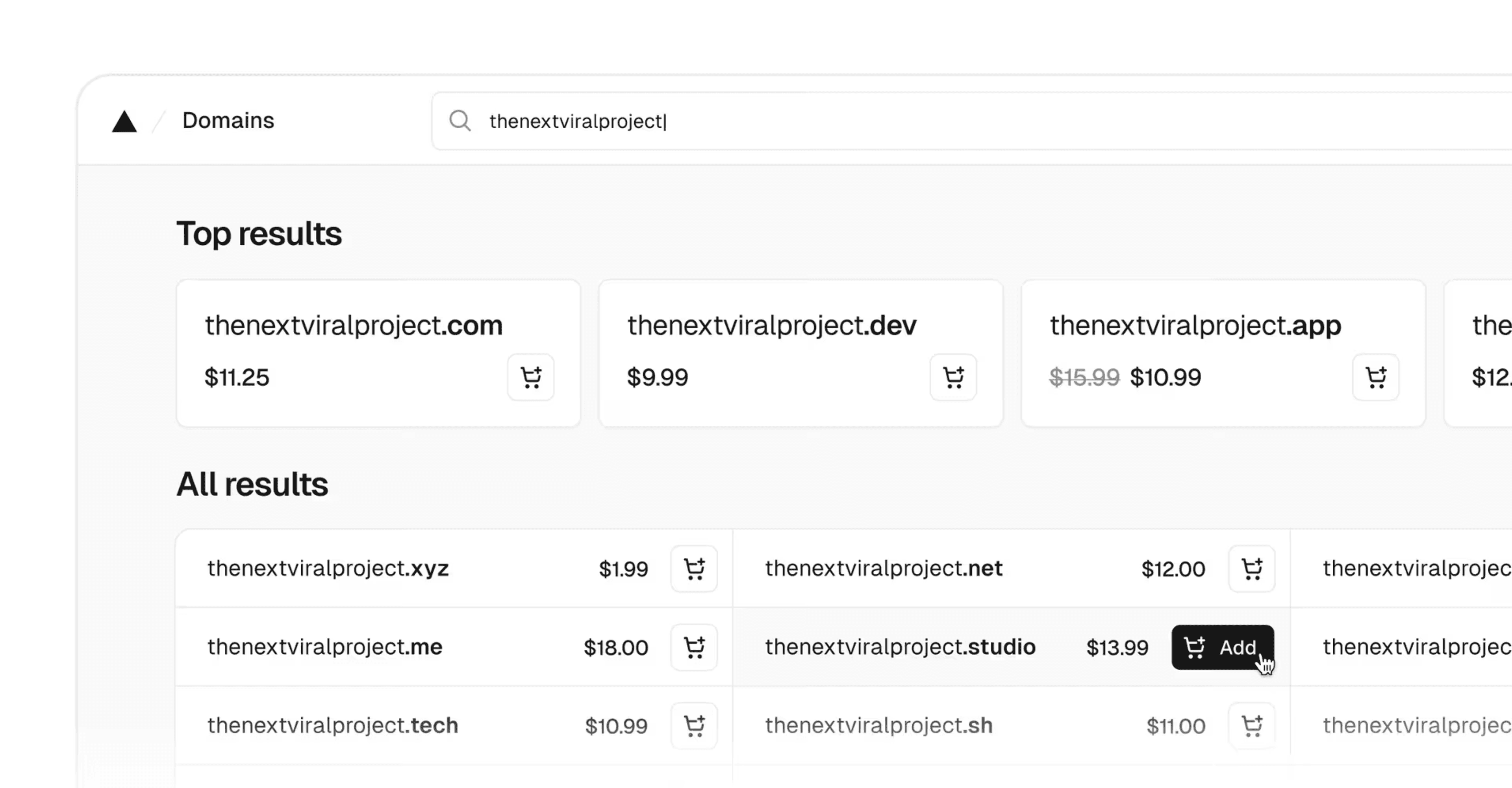

We’ve rebuilt Vercel Domains end to end, making it faster, simpler, and more affordable to find and buy the right domain for your project.